Automation is reshaping how data teams work. In 2026, AI-powered tools are helping analysts and engineers move from raw data to actionable insights with minimal manual coding. Python remains the backbone of modern data analysis, and the rise of intelligent automation tools is transforming traditional pipelines into faster, smarter, and more scalable systems.

This guide explores the top AI tools for automating Python data analysis pipelines, along with their practical use cases and benefits.

Why Automate Python Data Analysis Pipelines?

A Python data pipeline is a sequence of steps that takes data from ingestion to final insights. Traditionally, these pipelines required significant manual effort for tasks such as:

- Data cleaning

- Feature engineering

- Model selection

- Visualization

- Reporting

AI tools now automate many of these steps, reducing development time and improving consistency.
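
As a rough illustration of the "sequence of steps" idea, the sketch below chains three small functions using only the standard library. The function names (`clean`, `add_features`, `summarize`, `run_pipeline`) and the sample records are hypothetical, chosen for illustration and not taken from any particular tool.

```python
from functools import reduce
from statistics import mean

# Hypothetical raw records; None marks a missing value.
raw = [
    {"region": "north", "units": 10, "price": 2.5},
    {"region": "south", "units": None, "price": 3.0},
    {"region": "north", "units": 4, "price": 2.5},
]

def clean(rows):
    """Data cleaning: drop rows that contain missing values."""
    return [r for r in rows if all(v is not None for v in r.values())]

def add_features(rows):
    """Feature engineering: derive revenue from units and price."""
    return [{**r, "revenue": r["units"] * r["price"]} for r in rows]

def summarize(rows):
    """Reporting: reduce the rows to a small summary dict."""
    return {"rows": len(rows), "mean_revenue": mean(r["revenue"] for r in rows)}

def run_pipeline(data, steps):
    """Apply each step to the output of the previous one, in order."""
    return reduce(lambda acc, step: step(acc), steps, data)

summary = run_pipeline(raw, [clean, add_features, summarize])
print(summary)
```

Because each step is a plain function, a step can be swapped for an automated equivalent without touching the rest of the chain.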

Key Benefits of AI-Powered Automation

- Faster analysis cycles

- Reduced coding workload

- Automated feature selection

- Potentially better model accuracy through systematic model and hyperparameter search

- Scalable and repeatable workflows

Automation allows data professionals to focus more on strategy, interpretation, and decision-making rather than repetitive tasks.

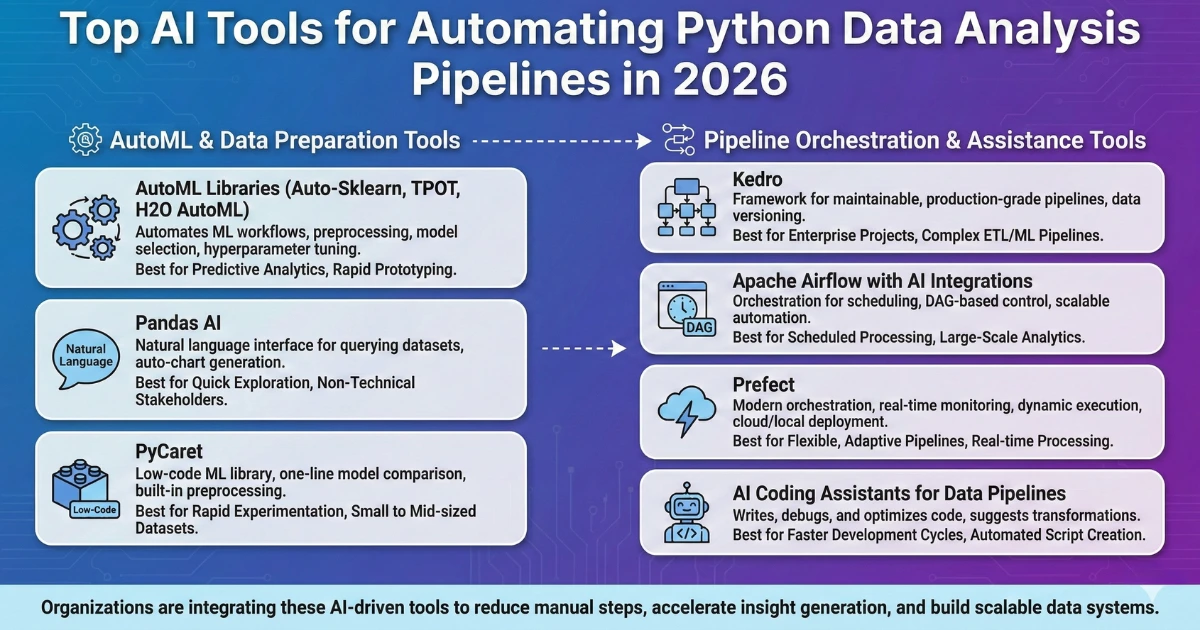

Top AI Tools for Automating Python Data Analysis Pipelines

1. AutoML Libraries (Auto-Sklearn, TPOT, H2O AutoML)

AutoML tools automate machine learning workflows, including preprocessing, model selection, and hyperparameter tuning.

Core capabilities:

- Automatic feature engineering

- Model comparison

- Hyperparameter optimization

- Pipeline generation

Best use cases:

- Predictive analytics

- Rapid model prototyping

- Teams with limited ML expertise
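
The core loop these libraries automate can be sketched in plain Python: score several candidate models with cross-validation, including a few hyperparameter settings, and keep the best. This is a simplified illustration under stated assumptions, not the API of Auto-Sklearn, TPOT, or H2O AutoML; the toy models, the synthetic data, and names such as `cv_mse` and `make_knn` are all hypothetical.

```python
from statistics import mean

def fit_mean(xs, ys):
    """Baseline: always predict the training mean."""
    m = mean(ys)
    return lambda x: m

def fit_linear(xs, ys):
    """Ordinary least squares for a single feature."""
    mx, my = mean(xs), mean(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    slope = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sxx
    return lambda x: my + slope * (x - mx)

def make_knn(k):
    """k-nearest-neighbours regressor; k is the hyperparameter being tuned."""
    def fit(xs, ys):
        pairs = list(zip(xs, ys))
        def predict(x):
            nearest = sorted(pairs, key=lambda p: abs(p[0] - x))[:k]
            return mean(y for _, y in nearest)
        return predict
    return fit

def cv_mse(fit, xs, ys, folds=4):
    """Mean squared error over k-fold cross-validation."""
    errors = []
    for f in range(folds):
        train = [i for i in range(len(xs)) if i % folds != f]
        test = [i for i in range(len(xs)) if i % folds == f]
        model = fit([xs[i] for i in train], [ys[i] for i in train])
        errors.extend((model(xs[i]) - ys[i]) ** 2 for i in test)
    return mean(errors)

# Synthetic data: y is roughly 3x + 1 with small deterministic noise.
xs = [float(i) for i in range(20)]
ys = [3 * x + 1 + (0.5 if i % 2 else -0.5) for i, x in enumerate(xs)]

candidates = {"mean": fit_mean, "linear": fit_linear}
candidates.update({f"knn(k={k})": make_knn(k) for k in (1, 3, 5)})

# Model comparison: the candidate with the lowest cross-validated error wins.
scores = {name: cv_mse(fit, xs, ys) for name, fit in candidates.items()}
best = min(scores, key=scores.get)
print(best, round(scores[best], 3))
```

Real AutoML libraries search far larger spaces of models and preprocessing steps, but the selection principle is the same: compare candidates on held-out data, not on the data they were fitted to.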

2. Pandas AI

Pandas AI adds a natural language interface to the widely used Pandas library. It allows users to query datasets using plain English instead of writing complex code.

Core capabilities:

- Natural language data queries

- Automatic chart generation

- Code suggestions for analysis

- Fast exploratory data analysis

Best use cases:

- Quick data exploration

- Non-technical stakeholders

- Rapid insight generation

3. PyCaret

PyCaret is a low-code machine learning library that automates much of the modeling workflow, from preprocessing to model comparison, with minimal code.

Core capabilities:

- One-line model comparison

- Built-in preprocessing

- Automated feature engineering

- Easy deployment

Best use cases:

- Rapid experimentation

- Small to mid-sized datasets

- Production-ready models

4. Kedro

Kedro is a Python framework designed to create production-grade, maintainable data pipelines.

Core capabilities:

- Modular pipeline architecture

- Reproducible workflows

- Data versioning integration

- Visualization of pipeline structure

Best use cases:

- Enterprise data projects

- Complex ETL and ML pipelines

- Team-based development
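
The modular idea can be sketched without Kedro itself: each node is a function with named inputs and a named output, and a catalog maps those names to data. The `Node` type, the `run` helper, and the dataset names below are hypothetical stand-ins for illustration, not Kedro's own classes.

```python
from typing import Callable, NamedTuple

class Node(NamedTuple):
    """A pipeline step that reads named inputs and writes one named output."""
    func: Callable
    inputs: tuple
    output: str

def drop_missing(rows):
    return [r for r in rows if None not in r.values()]

def total_by_region(rows):
    totals = {}
    for r in rows:
        totals[r["region"]] = totals.get(r["region"], 0) + r["sales"]
    return totals

def run(nodes, catalog):
    """Run nodes whose inputs are available until every node has run."""
    pending = list(nodes)
    while pending:
        ready = [n for n in pending if all(name in catalog for name in n.inputs)]
        if not ready:
            raise ValueError("unresolvable inputs: check node wiring")
        for n in ready:
            catalog[n.output] = n.func(*(catalog[name] for name in n.inputs))
            pending.remove(n)
    return catalog

# Nodes are declared out of order on purpose: the data dependencies,
# not the declaration order, decide when each one runs.
pipeline = [
    Node(total_by_region, ("clean_sales",), "region_totals"),
    Node(drop_missing, ("raw_sales",), "clean_sales"),
]
catalog = {
    "raw_sales": [
        {"region": "north", "sales": 120},
        {"region": "south", "sales": None},
        {"region": "north", "sales": 80},
        {"region": "south", "sales": 50},
    ]
}
result = run(pipeline, catalog)
print(result["region_totals"])
```

Keeping functions free of file paths and wiring them by dataset name is what makes such pipelines easy to test, reuse, and split across a team.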

5. Apache Airflow with AI Integrations

Apache Airflow is one of the most widely used orchestration tools for scheduling and automating workflows.

Core capabilities:

- Task scheduling

- DAG-based pipeline control

- Integration with ML models

- Scalable automation

Best use cases:

- Scheduled data processing

- Large-scale analytics pipelines

- Production environments
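
DAG-based control means a task runs only after every task it depends on has finished. The sketch below shows that ordering rule with Python's standard `graphlib` module. It is not Airflow code, and the task names and the `run_dag` helper are hypothetical.

```python
from graphlib import TopologicalSorter

# Hypothetical task graph: each key lists the tasks it depends on.
dag = {
    "extract": set(),
    "clean": {"extract"},
    "train": {"clean"},
    "plot": {"clean"},
    "report": {"train", "plot"},
}

def run_task(name, log):
    """Stand-in for real work; records the order tasks were executed in."""
    log.append(name)

def run_dag(graph):
    """Run every task after all of its upstream tasks have finished."""
    log = []
    sorter = TopologicalSorter(graph)
    sorter.prepare()  # raises graphlib.CycleError if the graph has a cycle
    while sorter.is_active():
        # Tasks returned together have no unmet dependencies, so a real
        # scheduler could hand them to parallel workers.
        for name in sorted(sorter.get_ready()):
            run_task(name, log)
            sorter.done(name)
    return log

order = run_dag(dag)
print(order)
```

An orchestrator adds scheduling, retries, and distributed workers on top of this, but the dependency resolution at its core follows the same rule.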

6. Prefect

Prefect is a modern orchestration platform that simplifies Python pipeline automation.

Core capabilities:

- Real-time pipeline monitoring

- Dynamic workflow execution

- Cloud and local deployment

- Event-driven automation

Best use cases:

- Flexible, adaptive pipelines

- Real-time data processing

- Cloud-native workflows
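
Monitoring and dynamic execution can be approximated in plain Python to show the idea. In Prefect, this kind of tracking is attached through its `@task` and `@flow` decorators. The `monitored` decorator, the `run_log` list, and the state names below are hypothetical and are not Prefect's API.

```python
import functools
import time

run_log = []  # hypothetical in-memory store of task run records

def monitored(func):
    """Record the final state and duration of every call to func."""
    @functools.wraps(func)
    def wrapper(*args, **kwargs):
        start = time.perf_counter()
        record = {"task": func.__name__, "state": "Running"}
        run_log.append(record)
        try:
            result = func(*args, **kwargs)
        except Exception as exc:
            record.update(state="Failed", error=repr(exc))
            raise
        else:
            record["state"] = "Completed"
            return result
        finally:
            record["seconds"] = time.perf_counter() - start
    return wrapper

@monitored
def load(values):
    return [float(v) for v in values]

@monitored
def average(values):
    return sum(values) / len(values)

def flow(values):
    """Dynamic branch: only compute the average when data arrived."""
    data = load(values)
    return average(data) if data else None

print(flow(["1", "2", "3"]))
try:
    flow(["1", "oops"])  # load() fails, so average() never runs
except ValueError:
    pass
print([(r["task"], r["state"]) for r in run_log])
```

Because the flow is ordinary Python, which tasks run can depend on the data seen at run time, which is the sense of "dynamic" used above.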

7. AI Coding Assistants for Data Pipelines

AI coding assistants are becoming central to automated Python workflows. These tools can write, debug, and optimize data pipeline code.

Core capabilities:

- Code generation

- Automated debugging

- Data transformation suggestions

- Pipeline optimization

Best use cases:

- Faster development cycles

- Automated script creation

- Rapid prototyping

Organizations such as Exotica AI Solutions are increasingly integrating AI-driven automation into data workflows, helping teams reduce manual steps and accelerate insight generation.

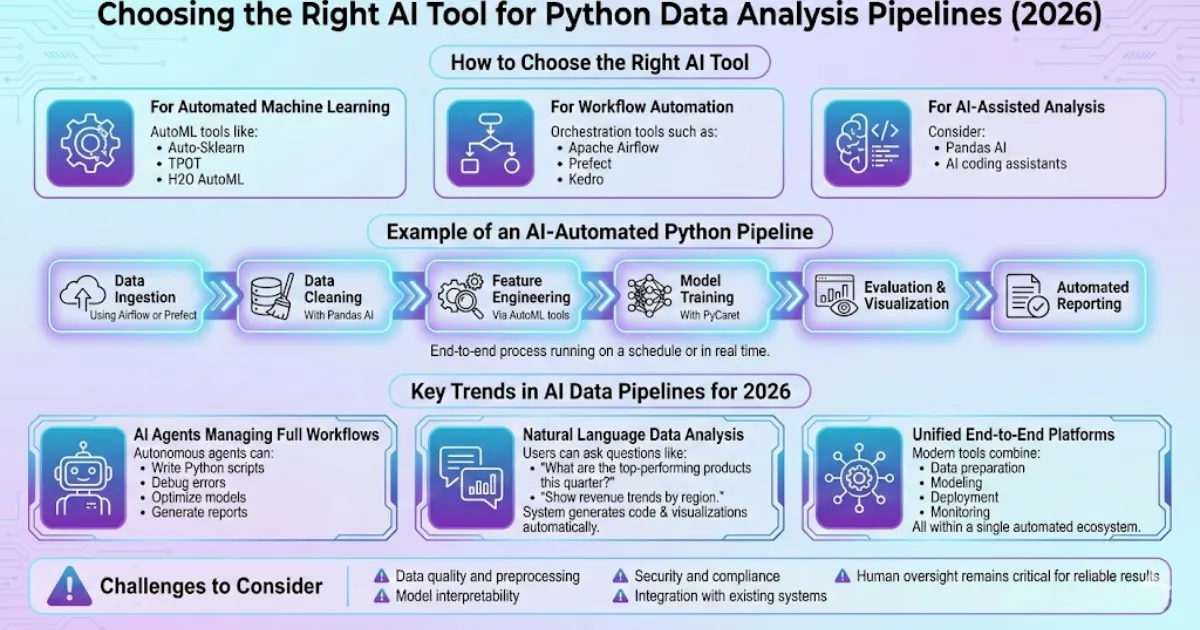

How to Choose the Right AI Tool

The best tool depends on your specific goals.

For Automated Machine Learning

Choose AutoML tools like:

- Auto-Sklearn

- TPOT

- H2O AutoML

For Workflow Automation

Use orchestration and pipeline frameworks such as:

- Apache Airflow

- Prefect

- Kedro

For AI-Assisted Analysis

Consider:

- Pandas AI

- AI coding assistants

Example of an AI-Automated Python Pipeline

A typical automated pipeline may include:

- Data ingestion using Airflow or Prefect

- Data cleaning with Pandas AI

- Feature engineering via AutoML tools

- Model training with PyCaret

- Evaluation and visualization

- Automated reporting

This end-to-end process can run on a schedule or in real time, depending on the use case.
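
A compact, library-free version of such a flow might look like the sketch below. It covers ingestion, cleaning, training, evaluation, and reporting, and leaves out feature engineering and visualization for brevity. The CSV content, column names, and function names are invented for illustration; in practice each stage would be delegated to the tools listed above.

```python
import csv
import io
from statistics import mean

# Hypothetical CSV export; a real pipeline would read a file, API, or database.
RAW_CSV = """day,ad_spend,visits
1,10,120
2,20,
3,30,310
4,40,405
5,50,515
"""

def ingest(text):
    """Ingestion: parse CSV text into a list of dict rows."""
    return list(csv.DictReader(io.StringIO(text)))

def clean(rows):
    """Cleaning: drop rows with empty fields and convert strings to floats."""
    return [{k: float(v) for k, v in r.items()} for r in rows if all(r.values())]

def train(rows):
    """Training: fit visits = intercept + slope * ad_spend by least squares."""
    xs = [r["ad_spend"] for r in rows]
    ys = [r["visits"] for r in rows]
    mx, my = mean(xs), mean(ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    slope = sxy / sxx
    return {"slope": slope, "intercept": my - slope * mx}

def evaluate(model, rows):
    """Evaluation: mean absolute error (on the training rows, for brevity)."""
    return mean(
        abs(model["intercept"] + model["slope"] * r["ad_spend"] - r["visits"])
        for r in rows
    )

def report(model, error, n_rows):
    """Reporting: render a one-line plain-text summary."""
    return (f"rows={n_rows} slope={model['slope']:.2f} "
            f"intercept={model['intercept']:.2f} mae={error:.2f}")

rows = clean(ingest(RAW_CSV))
model = train(rows)
summary = report(model, evaluate(model, rows), len(rows))
print(summary)
```

A production pipeline would evaluate on held-out data and would wrap these stages in a scheduler or orchestrator instead of calling them inline.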

Key Trends in AI Data Pipelines for 2026

AI Agents Managing Full Workflows

Autonomous agents can:

- Write Python scripts

- Debug errors

- Optimize models

- Generate reports

Natural Language Data Analysis

Users can ask questions like:

- “What are the top-performing products this quarter?”

- “Show revenue trends by region.”

The system generates code and visualizations automatically.

Unified End-to-End Platforms

Modern tools now combine:

- Data preparation

- Modeling

- Deployment

- Monitoring

All of these stages run within a single automated ecosystem.

Challenges to Consider

While automation offers major advantages, there are still important considerations:

- Data quality and preprocessing

- Model interpretability

- Security and compliance

- Integration with existing systems

Human oversight remains critical for reliable results.

Final Thoughts

AI tools are transforming how Python data analysis pipelines are built and maintained. Automation reduces manual coding, speeds up workflows, and enables teams to deliver insights faster. From AutoML libraries to orchestration platforms and AI assistants, the modern data stack is becoming increasingly intelligent.

Companies such as Exotica AI Solutions are adopting these advanced automation strategies to help businesses build scalable, efficient, and future-ready data systems.

With the right tools and a strong content silo strategy, organizations and websites alike can build authority in the rapidly evolving field of AI-driven data automation.