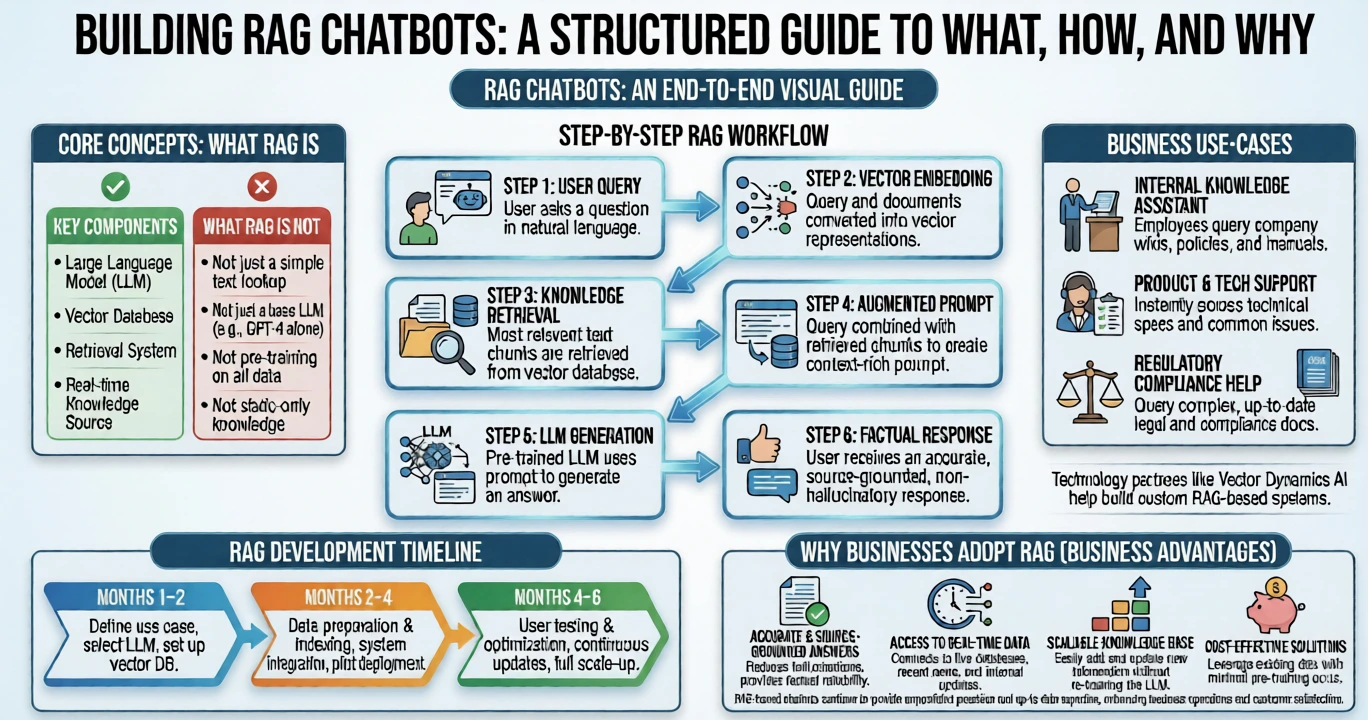

Artificial intelligence is transforming how organizations manage information, automate workflows, and improve customer experiences. Traditional chatbots often rely on predefined rules or limited training data, which can lead to inaccurate responses and restricted capabilities. Modern AI systems are moving beyond these limitations through the adoption of RAG chatbots, which combine intelligent search with advanced language models.

By integrating retrieval-augmented generation (RAG), businesses can build conversational AI systems that retrieve relevant information from trusted knowledge sources and generate context-aware responses. This approach allows companies to deploy chatbots capable of handling complex queries, internal documentation searches, and customer support tasks with greater accuracy.

This guide explains what RAG chatbots are, how they work, their architecture, and how businesses can build reliable AI assistants using RAG technology.

What Are RAG Chatbots?

A RAG chatbot is a conversational AI system that combines information retrieval with generative AI to produce accurate responses. Instead of relying solely on its training data, the chatbot retrieves relevant information from external sources such as documents, knowledge bases, or databases before generating an answer.

Definition:

Retrieval-augmented generation (RAG) is an AI architecture that enhances language models by connecting them to external knowledge sources, so that responses are generated from retrieved data.

This process allows RAG chatbots to provide answers based on real information rather than relying only on model memory.

RAG Bot Meaning

The term "RAG bot" refers to an AI chatbot that first searches a knowledge base for relevant information and then uses a language model to generate a conversational response.

This capability makes RAG-powered systems more reliable and better suited for enterprise use cases such as technical support, knowledge management, and research assistance.

What Is RAG in AI?

Many organizations exploring AI technologies ask the question, “What is RAG in AI?”

Retrieval-augmented generation is a method that combines two core technologies:

- Information retrieval systems

- Generative language models

The retrieval system identifies relevant content from external sources, while the language model generates natural language responses using that information.

RAG AI Meaning

In practice, "RAG AI" refers to AI systems that integrate semantic search with generative AI to deliver contextual answers based on external knowledge.

Language models used on their own have several limitations:

- Knowledge is restricted to training data

- Limited access to private or updated information

- Risk of generating incorrect answers

RAG architecture addresses these issues by allowing AI systems to access external knowledge repositories before generating responses.

Benefits of RAG Chatbots for Businesses

Organizations are increasingly implementing RAG chatbots because they improve accuracy, efficiency, and scalability.

Improved Accuracy

A RAG-based chatbot retrieves relevant documents and contextual information before generating responses, so answers are grounded in the organization's own knowledge sources rather than in the model's assumptions.

Real-Time Knowledge Updates

Businesses can update their knowledge base without retraining the model. Once updated documents have been re-indexed, the chatbot can retrieve the latest information.
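
The idea of updating knowledge without retraining can be sketched in a few lines. The example below is a minimal illustration, not a production design: the `KnowledgeBase` class, the `upsert` and `search` method names, and the word-count "embedding" are all assumptions made for this sketch, standing in for a real embedding model and vector database.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: word counts (a stand-in for a real embedding model)."""
    return Counter(re.findall(r"\w+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class KnowledgeBase:
    """Hypothetical in-memory store: document id -> (text, embedding)."""

    def __init__(self) -> None:
        self._docs = {}

    def upsert(self, doc_id: str, text: str) -> None:
        # Re-embedding the changed document is the only "update" step needed.
        self._docs[doc_id] = (text, embed(text))

    def search(self, query: str) -> str:
        # Return the text of the single most similar document.
        q = embed(query)
        best = max(self._docs, key=lambda d: cosine(q, self._docs[d][1]))
        return self._docs[best][0]


kb = KnowledgeBase()
kb.upsert("returns", "Returns are accepted within 30 days of purchase.")
kb.upsert("shipping", "Standard shipping takes 5 business days.")
before = kb.search("How long are returns accepted?")

# The policy changes: only the stored document is replaced, no model is retrained.
kb.upsert("returns", "Returns are accepted within 60 days of purchase.")
after = kb.search("How long are returns accepted?")
```

In this sketch the language model never changes; only the stored document and its embedding do, which is why the second search reflects the new policy.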

Domain-Specific Expertise

RAG systems can specialize in industries such as healthcare, finance, legal services, and technical documentation by connecting to domain-specific datasets.

Reduced AI Hallucinations

Because information is retrieved directly from trusted sources, the likelihood of the AI generating incorrect responses is reduced, although not eliminated.

Scalable Knowledge Access

Organizations can connect RAG chatbots to multiple knowledge repositories, enabling users to access large volumes of information quickly.

Companies implementing enterprise AI solutions often work with providers such as Exotica AI Solutions to develop scalable RAG-powered knowledge assistants.

RAG Architecture Explained

Understanding RAG architecture helps explain how these systems deliver accurate responses.

A typical RAG system consists of several layers that work together to process queries and generate responses.

Data Sources

The first layer includes knowledge repositories such as:

- company documentation

- product manuals

- research papers

- internal knowledge bases

- websites and databases

These sources provide the factual information used by the chatbot.

Data Processing and Indexing

Documents must be processed before retrieval can occur.

The process includes:

- splitting documents into smaller chunks

- generating embeddings

- storing embeddings in a vector database

This enables semantic search, which allows the system to find content related to the meaning of a query rather than just keywords.
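
The three processing steps above can be sketched as follows. This is a simplified illustration under stated assumptions: `chunk_text`, `embed`, and `build_index` are hypothetical helper names, the hashed bag-of-words vector is only a stand-in for a real embedding model, and a plain Python list stands in for a vector database.

```python
import hashlib
import math


def chunk_text(text: str, chunk_size: int = 40, overlap: int = 10) -> list:
    """Split text into word-based chunks that overlap by `overlap` words."""
    words = text.split()
    if not words:
        return []
    step = chunk_size - overlap
    # Stop early enough that the last chunk is not just a repeated tail.
    starts = range(0, max(len(words) - overlap, 1), step)
    return [" ".join(words[i:i + chunk_size]) for i in starts]


def embed(text: str, dim: int = 64) -> list:
    """Toy embedding: hash each word into one of `dim` buckets, then normalize."""
    vec = [0.0] * dim
    for word in text.lower().split():
        bucket = int(hashlib.md5(word.encode("utf-8")).hexdigest(), 16) % dim
        vec[bucket] += 1.0
    norm = math.sqrt(sum(v * v for v in vec))
    return [v / norm for v in vec] if norm else vec


def build_index(text: str) -> list:
    """Chunk a document and store each chunk next to its embedding."""
    return [
        {"id": i, "text": chunk, "vector": embed(chunk)}
        for i, chunk in enumerate(chunk_text(text))
    ]


# A synthetic 100-word document, used only to show the chunk boundaries.
document = " ".join(f"word{i}" for i in range(100))
index = build_index(document)
```

The overlap keeps a sentence that straddles a chunk boundary retrievable from either side; the chunk size and overlap values here are arbitrary and would normally be tuned per document type.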

Retrieval System

When a user submits a query, the system searches the vector database for relevant document sections.

The retrieval engine selects the most relevant pieces of information based on similarity to the query.
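
As a rough sketch of similarity-based selection, the example below ranks stored chunks by cosine similarity to a query vector and keeps the top results. The three-dimensional vectors are hand-written for illustration only (real embeddings have hundreds or thousands of dimensions), and `top_k` is a hypothetical helper name.

```python
import math


def cosine_similarity(a: list, b: list) -> float:
    """Cosine of the angle between two dense vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def top_k(query_vec: list, indexed_chunks: list, k: int = 2) -> list:
    """Return the k (score, text) pairs most similar to the query vector."""
    scored = [
        (cosine_similarity(query_vec, vec), text)
        for text, vec in indexed_chunks
    ]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:k]


# Illustrative chunks with made-up vectors.
indexed_chunks = [
    ("Reset your password from the account settings page.", [0.9, 0.1, 0.0]),
    ("Invoices are emailed on the first day of each month.", [0.1, 0.9, 0.1]),
    ("Two-factor authentication can be enabled under security.", [0.7, 0.0, 0.6]),
]
query_vec = [1.0, 0.0, 0.2]  # imagined embedding of a login-related question

results = top_k(query_vec, indexed_chunks, k=2)
for score, text in results:
    print(f"{score:.3f}  {text}")
```

A vector database performs the same ranking, typically with an approximate nearest-neighbor index so that it does not have to score every stored chunk.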

Language Model

The retrieved content is then passed to a large language model that generates a conversational response.

This combination of retrieval and generation forms the core of retrieval-augmented generation.
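
One common way to pass retrieved content to a language model is to place it in the prompt ahead of the user's question. The sketch below shows that step only; `build_prompt` is a hypothetical helper, the instruction wording is just one possible phrasing, and the call to the model itself is deliberately left out.

```python
def build_prompt(question: str, retrieved_chunks: list) -> str:
    """Combine retrieved chunks and the user's question into a single prompt."""
    # Number each chunk so the model (and a reader) can refer back to sources.
    context = "\n\n".join(
        f"[{i}] {chunk}" for i, chunk in enumerate(retrieved_chunks, start=1)
    )
    return (
        "Answer the question using only the context below. "
        "If the context does not contain the answer, say you do not know.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}\n"
        "Answer:"
    )


prompt = build_prompt(
    "How do I reset my password?",
    [
        "Reset your password from the account settings page.",
        "Two-factor authentication can be enabled under security.",
    ],
)
print(prompt)
```

Instructing the model to rely only on the supplied context is what ties the generated answer back to the retrieved documents.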

Response Generation

Finally, the system delivers the generated answer to the user through a chatbot interface.

RAG Chatbot Workflow

A typical RAG chatbot workflow follows a structured pipeline:

- A user asks a question.

- The system converts the query into vector embeddings.

- The vector database performs a semantic search.

- Relevant document sections are retrieved.

- Retrieved information is passed to a language model.

- The model generates a contextual response.

- The chatbot presents the final answer.

This workflow grounds each answer in retrieved, relevant knowledge rather than in the model's memory alone.
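
The workflow above can be sketched end to end in a single function. Everything here is a simplified assumption: the word-count `embed` function stands in for a real embedding model, sorting a Python list stands in for a vector database search, and `stub_generate` is a placeholder that echoes the retrieved context instead of calling a language model.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: word counts (a stand-in for a real embedding model)."""
    return Counter(re.findall(r"\w+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def stub_generate(prompt: str) -> str:
    """Placeholder for a language model call: repeats the first context line."""
    context = prompt.split("Context:\n", 1)[1].split("\n\nQuestion:", 1)[0]
    return f"(stub) According to the knowledge base: {context.splitlines()[0]}"


def answer(question: str, chunks: list, generate, k: int = 1) -> str:
    query_vec = embed(question)                       # query -> embedding
    ranked = sorted(                                  # semantic search
        chunks, key=lambda c: cosine(query_vec, embed(c)), reverse=True
    )
    retrieved = ranked[:k]                            # relevant sections
    prompt = (                                        # context for the model
        "Context:\n" + "\n".join(retrieved) + f"\n\nQuestion: {question}"
    )
    return generate(prompt)                           # generated response


chunks = [
    "The premium plan includes priority support and 50 GB of storage.",
    "Refunds are processed within 7 business days.",
    "The mobile app is available on iOS and Android.",
]
reply = answer("How long do refunds take?", chunks, stub_generate)
print(reply)
```

Passing the generator in as a parameter keeps retrieval and generation separate, so the stub can later be swapped for a real model call without touching the retrieval code.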

RAG Chatbot Using LlamaIndex

Developers often rely on frameworks to simplify building RAG systems. One widely used tool is LlamaIndex.

A LlamaIndex-based RAG chatbot lets developers connect external knowledge sources to language models through structured data indexing.

Key Capabilities of LlamaIndex

- document ingestion from multiple sources

- structured data indexing

- integration with vector databases

- query orchestration

- connection to large language models

Many developers combine Llama 3 models with LlamaIndex to build advanced knowledge assistants.
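
A minimal LlamaIndex sketch might look like the following. It assumes a recent `llama-index` release in which `SimpleDirectoryReader` and `VectorStoreIndex` are importable from `llama_index.core`, a local folder of documents (the `data` path is a placeholder), and that an LLM and embedding model have already been configured. To the best of our knowledge the library defaults to OpenAI models and needs an API key unless configured otherwise. Configuring a Llama 3 model is not shown here.

```python
def build_query_engine(docs_dir: str = "data"):
    """Load documents from a folder, index them, and return a query engine.

    The import is done inside the function so this sketch can be read and
    loaded even where the llama-index package is not installed.
    """
    from llama_index.core import SimpleDirectoryReader, VectorStoreIndex

    # Ingest every supported file found in the folder.
    documents = SimpleDirectoryReader(docs_dir).load_data()

    # Chunk, embed, and store the documents in an in-memory vector index.
    index = VectorStoreIndex.from_documents(documents)

    # The query engine retrieves the top matching chunks and asks the LLM.
    return index.as_query_engine(similarity_top_k=3)


# Example usage (requires llama-index, documents in ./data, and model access):
# engine = build_query_engine("data")
# print(engine.query("What is our refund policy?"))
```

The framework handles the chunking, embedding, retrieval, and prompt assembly steps described earlier, which is the main reason developers reach for it instead of wiring those pieces together by hand.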

RAG Pipeline Chatbot with n8n

Automation tools can simplify the development of AI workflows.

An n8n-based RAG chatbot pipeline typically includes automated processes for:

- data ingestion

- embedding generation

- vector database indexing

- query processing

- response generation

This automation allows organizations to deploy scalable AI chatbots without complex infrastructure.

RAG vs Traditional Chatbots

Understanding the difference between traditional chatbots and RAG chatbots highlights why RAG architecture is gaining popularity.

| Feature | Traditional Chatbots | RAG Chatbots |
|---|---|---|
| Knowledge Source | Training data | External knowledge bases |
| Accuracy | Limited | High |
| Updates | Requires retraining | Easy document updates |
| Context Awareness | Limited | Context-rich responses |
| Use Cases | Basic support | Enterprise knowledge systems |

RAG systems combine the strengths of search engines and generative AI to deliver reliable information.

How to Build a RAG Chatbot

Organizations interested in AI automation often want to know how to build a RAG chatbot. The steps below outline a typical approach.

Step 1: Choose a Language Model

Select a large language model capable of generating conversational responses.

Step 2: Prepare Knowledge Sources

Collect documents, knowledge bases, and internal resources that the chatbot will use.

Step 3: Generate Embeddings

Convert text data into embeddings so the system can perform semantic search.

Step 4: Store Data in a Vector Database

Use vector databases to store embeddings and enable fast retrieval.

Step 5: Implement Retrieval Logic

Develop a retrieval system that identifies relevant documents based on user queries.

Step 6: Connect the Language Model

Feed retrieved information to the language model so it can generate accurate responses.

Step 7: Deploy the Chatbot

Integrate the chatbot into websites, applications, or internal systems.
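
For the deployment step, one simple option is to expose the chatbot behind a small HTTP endpoint that a website or internal tool can call. The sketch below uses only the Python standard library; the `answer_question` placeholder, the `handle_chat` helper, the JSON field names, and the port are all assumptions for illustration, and a real deployment would add authentication, logging, and a production-grade server.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer


def answer_question(question: str) -> str:
    """Hypothetical placeholder for the retrieval + generation pipeline."""
    return f"(placeholder answer for: {question})"


def handle_chat(payload: dict) -> tuple:
    """Validate a request payload and return (HTTP status, response body)."""
    question = str(payload.get("question", "")).strip()
    if not question:
        return 400, {"error": "Field 'question' is required."}
    return 200, {"answer": answer_question(question)}


class ChatHandler(BaseHTTPRequestHandler):
    """Accepts POST requests whose body is JSON like {"question": "..."}."""

    def do_POST(self):
        length = int(self.headers.get("Content-Length", 0))
        try:
            payload = json.loads(self.rfile.read(length) or b"{}")
        except json.JSONDecodeError:
            payload = {}
        if not isinstance(payload, dict):
            payload = {}
        status, body = handle_chat(payload)
        data = json.dumps(body).encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(data)))
        self.end_headers()
        self.wfile.write(data)


# To serve locally (blocks until interrupted):
# HTTPServer(("127.0.0.1", 8000), ChatHandler).serve_forever()
```

Keeping the request logic in `handle_chat`, separate from the HTTP handler, makes the same function reusable from a web widget, a messaging integration, or a test suite.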

Organizations implementing enterprise AI solutions often collaborate with providers such as Exotica AI Solutions to design scalable RAG architectures.

What Makes a Good Chatbot?

Understanding what makes a good chatbot helps organizations build effective AI assistants.

Key characteristics include the following:

- Accuracy: Responses should be based on reliable knowledge sources.

- Context Awareness: The chatbot should understand the user’s intent and conversation context.

- Speed: Fast retrieval and generation ensure a smooth user experience.

- Clear Communication: Responses should be concise and easy to understand.

- Scalability: A well-designed chatbot can manage large knowledge bases and growing user demand.

RAG architecture supports these capabilities by combining intelligent retrieval with generative AI.

Real-World Chatbot Examples Using RAG

Concrete examples help illustrate how RAG technology works in real environments.

- Customer Support Assistant: Companies connect product manuals and FAQs to a RAG system so customers can receive accurate answers instantly.

- Enterprise Knowledge Assistant: Employees can search internal documentation, policies, and training materials through conversational queries.

- Research Assistant: Researchers can explore large document collections quickly using natural language questions.

- Technical Documentation Search: Developers can retrieve information from technical documentation without manually browsing large datasets.

These examples demonstrate how RAG chatbots improve knowledge accessibility across organizations.

The Future of RAG Chatbots

As organizations continue adopting AI technologies, RAG chatbots are becoming a central component of intelligent digital systems.

RAG architectures enable businesses to:

- unlock insights from internal knowledge

- automate customer support

- improve employee productivity

- provide accurate AI-powered assistance

Advancements in semantic search, contextual AI, and generative models will further enhance the capabilities of RAG systems.

Conclusion

The evolution of conversational AI is moving beyond rule-based systems toward intelligent knowledge assistants. RAG chatbots represent a major advancement by combining generative AI with real-time knowledge retrieval.

Through retrieval-augmented generation, organizations can build AI systems capable of delivering accurate, context-aware responses across a wide range of applications. As businesses continue investing in AI-driven technologies, implementing a scalable RAG-based chatbot architecture will play an essential role in improving knowledge access, customer experiences, and operational efficiency.

Mohit Thakur is an experienced Digital Marketing Expert, SEO Team Leader, and Content Writer with over 6 years of expertise in search engine optimization, content strategy, and digital growth. He specializes in research-driven SEO and crafting high-quality, compelling content that helps businesses improve their online visibility, organic traffic, and lead generation.

With hands-on experience across multiple industries, Mohit focuses on creating user-focused, well-researched content aligned with the latest Google algorithms and AI search trends. His approach combines technical SEO, content writing, content optimization, and data analysis to deliver consistent and measurable results.