The result: AI that actually works for your business, not just a chatbot wrapper around someone else’s model.

Every business leader has had the same conversation in the last 18 months.

Someone on your team demos ChatGPT. The room gets excited. You run a pilot. Results are mixed — the AI doesn’t know your products, misquotes your policies, and occasionally says things that would alarm your legal team. The project stalls. The enthusiasm fades.

What went wrong wasn’t AI. What went wrong was using a general-purpose model for a specific-purpose problem.

LLM development services exist to close that gap. Rather than forcing your business to work around the limitations of off-the-shelf models, specialist large language model development companies build AI systems trained on your data, aligned to your workflows, and deployed inside your infrastructure. The result is AI that performs like a subject-matter expert, not a well-meaning intern on their first day. According to Gartner, a significant share of generative AI proof-of-concept projects fail to reach production, and the gap between demo and deployment is where specialist LLM development companies earn their value.

This guide covers everything a business leader needs to evaluate, select, and get real ROI from LLM development services in 2026.

In this guide

- What are LLM development services?

- Types of LLM development: fine-tuning, RAG, and custom builds

- What LLM development services actually include

- LLM use cases that deliver measurable ROI

- How to choose an LLM development company

- Build custom vs. use the API: the honest comparison

- What to expect: timelines and ROI

- Frequently asked questions

What Are LLM Development Services?

Large language models are AI systems trained on vast amounts of text data to understand, generate, and reason with natural language. GPT-4, Claude, Gemini, and Llama are all examples. They are powerful — but they are also general. They know a little about everything and a lot about nothing specific.

LLM development services take this foundation and make it specific. A specialist large language model development company works with your organization to:

- Identify the exact business problem that AI can genuinely improve, not just automate

- Select the right base model architecture for your use case and data requirements

- Fine-tune or augment the model with your proprietary data — product catalogs, legal documents, support tickets, clinical notes, financial records

- Build the infrastructure to deploy, monitor, and maintain the model in production

- Integrate the AI into your existing systems — CRM, ERP, communication platforms, and customer-facing interfaces

The output is not a chatbot that sits on your website. It is an AI system grounded in the documents your company has produced. It understands your industry’s terminology, operates within your compliance requirements, and can be measurably improved over time through retraining and knowledge-base updates as more of your data becomes available.

What does an LLM development company do?

An LLM development company analyzes your business requirements, selects or builds a suitable language model architecture, trains or fine-tunes it on your proprietary data, integrates it into your existing systems, and deploys it into production with monitoring and ongoing optimization. They turn general-purpose AI into domain-specific business intelligence.

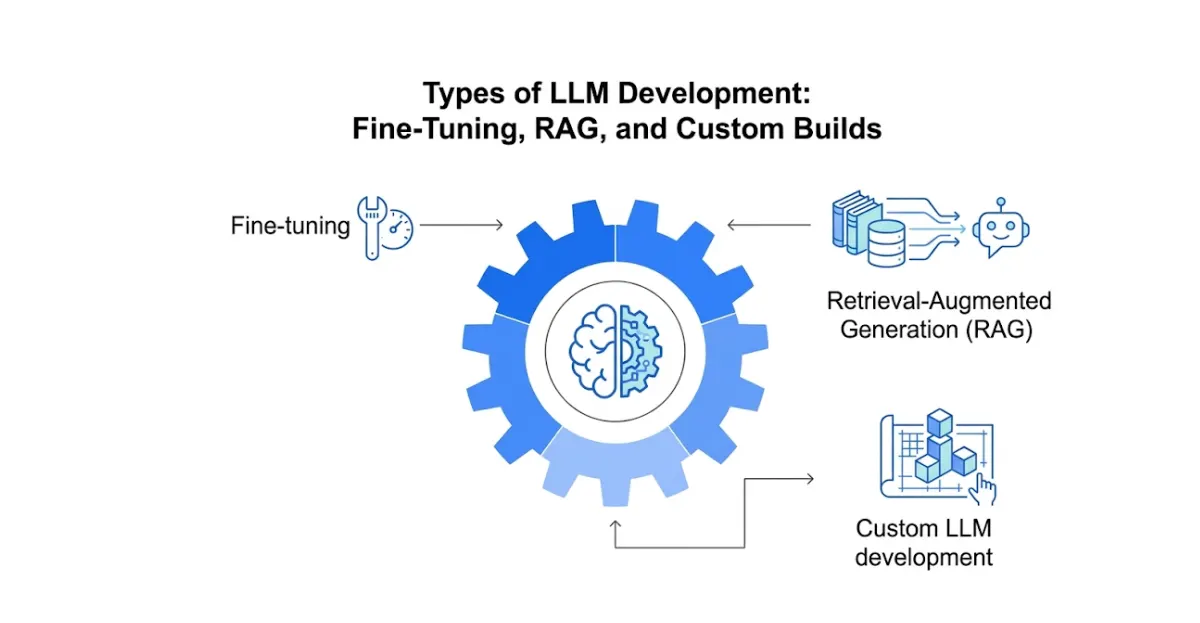

Types of LLM Development: Fine-Tuning, RAG, and Custom Builds

Not all LLM development is the same. Understanding the three primary approaches helps you match the right large language model services to your business problem — and avoid paying for complexity you do not need.

Fine-tuning

Fine-tuning takes an existing pre-trained model (GPT-4, Llama 3, Mistral) and continues training it on your specific dataset. The model learns the patterns, terminology, and context of your domain. A legal firm fine-tuning on 10 years of case files can produce an AI that drafts and reasons in the firm’s own terms, much like an experienced paralegal. A retailer fine-tuning on product descriptions and customer queries can produce an AI that answers and sells like your best rep.

Best for: Businesses with large proprietary datasets, domain-specific language requirements, or compliance needs that require the model to behave in a specific way.
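Most fine-tuning projects start by turning historical examples into prompt/response pairs. The sketch below converts support Q&A pairs into a chat-message JSONL layout (one JSON object per line, each holding a `messages` list with `system`, `user`, and `assistant` turns), which is the format OpenAI documents for chat-model fine-tuning. The system prompt, the company name "Acme", and the helper names are hypothetical, chosen only for illustration.

```python
import json

# Hypothetical system prompt; "Acme" stands in for your company.
SYSTEM_PROMPT = "You are Acme's support assistant. Answer according to Acme policy."


def to_chat_record(question: str, answer: str, system: str = SYSTEM_PROMPT) -> dict:
    """Wrap one historical Q&A pair as a chat-format training example."""
    return {
        "messages": [
            {"role": "system", "content": system},
            {"role": "user", "content": question.strip()},
            {"role": "assistant", "content": answer.strip()},
        ]
    }


def build_finetune_jsonl(pairs: list[tuple[str, str]]) -> str:
    """Serialize (question, answer) pairs as JSONL, one JSON object per line.

    Pairs with an empty question or answer are skipped because they carry no signal.
    """
    lines = [
        json.dumps(to_chat_record(q, a), ensure_ascii=False)
        for q, a in pairs
        if q.strip() and a.strip()
    ]
    return "".join(line + "\n" for line in lines)
```

Writing the returned string to a `.jsonl` file produces a training file. Holding some records back as a validation set lets you score the fine-tuned model on examples it never saw.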

Retrieval-Augmented Generation (RAG)

RAG combines a general language model with a real-time retrieval system. Instead of baking knowledge into the model through training, RAG gives the model access to a searchable knowledge base — your documentation, your policies, your product data — at inference time. The model retrieves the relevant information and generates a response grounded in your actual content.

Our RAG-as-a-Service platform is one example of how this approach is deployed in production — giving businesses enterprise-grade AI knowledge retrieval without the cost and complexity of full model training. For a deeper comparison of how RAG fits within intelligent automation architectures, see our intelligent automation services guide.

Best for: Businesses that need AI to answer questions accurately from a defined knowledge base — customer support, internal knowledge management, document search, compliance Q&A.
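A minimal sketch of the retrieve-then-generate loop described above. To stay self-contained it scores documents with bag-of-words cosine similarity; a production pipeline would swap in dense vector embeddings and a vector index. The knowledge-base contents and the `build_grounded_prompt` helper are hypothetical, and the final call to a language model is left out.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> Counter:
    """Lowercase word counts; a crude stand-in for an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, docs: dict[str, str], k: int = 2) -> list[str]:
    """Return the ids of the k documents most similar to the query."""
    q = tokenize(query)
    ranked = sorted(docs, key=lambda doc_id: cosine(q, tokenize(docs[doc_id])), reverse=True)
    return ranked[:k]


def build_grounded_prompt(query: str, docs: dict[str, str], k: int = 2) -> str:
    """Assemble a prompt that tells the model to answer only from retrieved sources."""
    context = "\n".join(f"[{doc_id}] {docs[doc_id]}" for doc_id in retrieve(query, docs, k))
    return (
        "Answer using only the sources below and cite their ids. "
        "If the answer is not in the sources, say you don't know.\n\n"
        f"Sources:\n{context}\n\nQuestion: {query}"
    )
```

Replacing `tokenize` and `cosine` with an embedding model and a vector database changes the quality of retrieval, not the shape of the loop: retrieve relevant passages, then instruct the model to answer from them.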

Custom LLM development

Custom LLM development builds a model architecture from the ground up or significantly modifies an open-source foundation model for highly specialized requirements. This is the right approach for businesses in regulated industries — healthcare, finance, legal — where data cannot leave your infrastructure, where proprietary model architecture provides competitive advantage, or where the use case simply cannot be served by existing models.

Best for: Enterprises with strict data sovereignty requirements, unique domain languages (medical imaging reports, financial derivative contracts, specialized engineering documentation), or a competitive incentive to own their AI infrastructure outright. Our custom Python development team builds the underlying ML infrastructure for organizations deploying self-hosted custom LLMs.

| Approach | Data requirement | Cost | Time to deploy | Best for |
|---|---|---|---|---|
| Fine-tuning | Medium: labeled domain data | Medium | 4–12 weeks | Domain-specific language, tone, behavior |
| RAG | Low: existing documents | Low–medium | 2–6 weeks | Knowledge retrieval, Q&A, document search |
| Custom build | High: proprietary corpus | High | 3–9 months | Regulated industries, proprietary architecture |

What LLM Development Services Actually Include

When you engage a professional large language model services provider, you are not buying a model. You are buying a structured delivery process. Here is what a full-service LLM development engagement should include at every stage.

1. Discovery and use case definition

Before any model is built, a qualified provider conducts a thorough discovery — mapping the business problem, identifying the data available, assessing infrastructure constraints, and defining success metrics. This step prevents the most expensive mistake in LLM projects: building the right model for the wrong problem.

2. Data assessment and preparation

LLMs are only as good as the data they learn from. A serious LLM development company audits your available data — its volume, quality, structure, and licensing — and prepares it for training or retrieval. This includes cleaning, labeling, formatting, and in some cases, synthetic data generation to fill gaps.
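As an illustration of the cleaning step, the sketch below normalizes whitespace, masks email addresses, drops fragments too short to be useful, and removes exact duplicates by content hash. The length threshold and the email pattern are illustrative assumptions; real projects usually add near-duplicate detection, broader PII handling, and licensing checks.

```python
import hashlib
import re

# Simple email pattern; an assumption for illustration, not a complete PII detector.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def clean_corpus(records: list[str], min_chars: int = 20) -> list[str]:
    """Normalize, mask emails, filter short records, and drop exact duplicates."""
    seen: set[str] = set()
    cleaned = []
    for text in records:
        text = re.sub(r"\s+", " ", text).strip()  # collapse runs of whitespace
        text = EMAIL.sub("[EMAIL]", text)         # mask one common PII type
        if len(text) < min_chars:                 # too short to teach anything
            continue
        digest = hashlib.sha256(text.lower().encode("utf-8")).hexdigest()
        if digest in seen:                        # exact duplicate, ignoring case
            continue
        seen.add(digest)
        cleaned.append(text)
    return cleaned
```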

3. Model selection and architecture design

The choice of base model matters enormously. GPT-4-class models offer the broadest capability but come with cost, latency, and data privacy tradeoffs. Open-weight models such as Llama 3 or Mistral offer more control and can be self-hosted. Specialist LLM developers will select and justify the right architecture based on your requirements, not on what is easiest to implement. According to McKinsey’s generative AI research, organizations that invest in domain-specific model selection consistently outperform those that default to general-purpose APIs on accuracy-sensitive business tasks.

4. Training, fine-tuning, or RAG pipeline build

This is the core build phase: fine-tuning on proprietary data, building a RAG pipeline with vector embeddings and retrieval logic, or developing a custom model architecture. It requires deep ML engineering expertise and access to GPU compute infrastructure. Our workflow automation services integrate directly with LLM pipelines, automatically connecting model outputs to downstream business systems and action workflows.
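Before documents can be embedded for a RAG pipeline, they are usually split into overlapping chunks, so each retrieved passage is small enough to fit in the prompt but keeps some surrounding context. A minimal word-based chunker, assuming chunk size and overlap are measured in words (production systems often count model tokens instead, and the default sizes here are arbitrary):

```python
def chunk_words(text: str, size: int = 200, overlap: int = 40) -> list[str]:
    """Split text into windows of `size` words, each sharing `overlap` words with the previous one."""
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("require size > 0 and 0 <= overlap < size")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):  # this window already reaches the end of the text
            break
    return chunks
```

The overlap means a sentence that straddles a chunk boundary still appears whole in at least one chunk, at the cost of storing some words twice.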

5. Evaluation and safety testing

LLMs can produce biased or harmful outputs, and they can state incorrect or fabricated information with confidence, a failure mode known as hallucination. Rigorous evaluation benchmarks the model against your ground-truth data, tests edge cases, measures accuracy and relevance, and implements guardrails that block outputs falling outside acceptable parameters.
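A minimal sketch of benchmarking against ground truth with a simple output guardrail in front of the model. `model_fn` stands in for whatever model or pipeline is under test. The scoring rule (the expected answer must appear in the response) and the blocked-phrase list are deliberately simple assumptions; real evaluations typically add graded relevance scoring, human review, and adversarial test cases.

```python
from typing import Callable

# Hypothetical phrases the business never wants the assistant to say.
BLOCKED_PHRASES = ("guaranteed return", "legal advice")
FALLBACK = "I can't help with that. Let me connect you with a specialist."


def guardrail(response: str) -> str:
    """Replace any response containing a blocked phrase with a safe fallback."""
    lowered = response.lower()
    if any(phrase in lowered for phrase in BLOCKED_PHRASES):
        return FALLBACK
    return response


def evaluate(model_fn: Callable[[str], str], cases: list[tuple[str, str]]) -> float:
    """Share of test cases whose guarded response contains the expected answer."""
    if not cases:
        return 0.0
    correct = 0
    for prompt, expected in cases:
        response = guardrail(model_fn(prompt))
        if expected.lower() in response.lower():
            correct += 1
    return correct / len(cases)
```

Scoring the guarded output, rather than the raw model output, measures what users would actually see.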

6. Deployment and integration

Production deployment — connecting the model to your CRM, customer support platform, internal tools, or customer-facing interface — and setting up monitoring infrastructure to track performance, catch degradation, and alert your team to anomalies. For organizations deploying LLMs within telecom, healthcare, or logistics operations, our industry-specific practice areas cover the compliance architecture required: healthcare AI solutions, telecom AI, and logistics and supply chain AI.

7. Ongoing optimization and maintenance

Model performance drifts as the world changes and as your business data evolves. The best LLM development services providers offer continuous monitoring, model updates, retraining cycles, and performance optimization as part of a managed service relationship.
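Monitoring for degradation can start as simply as comparing a rolling quality score from production against the accuracy measured at launch. The sketch below assumes each production response receives a pass/fail label (from user feedback or spot checks); the `DriftMonitor` name, window size, and tolerance are illustrative, not recommendations.

```python
from collections import deque


class DriftMonitor:
    """Flag when rolling accuracy falls more than `tolerance` below the launch baseline."""

    def __init__(self, baseline: float, window: int = 100, tolerance: float = 0.05):
        if window <= 0:
            raise ValueError("window must be positive")
        self.baseline = baseline
        self.tolerance = tolerance
        # Keeps only the most recent `window` outcomes; older ones drop off automatically.
        self.results: deque[bool] = deque(maxlen=window)

    def record(self, passed: bool) -> bool:
        """Add one labeled outcome; return True if an alert should fire."""
        self.results.append(passed)
        if len(self.results) < self.results.maxlen:  # wait for a full window before judging
            return False
        rolling = sum(self.results) / len(self.results)
        return rolling < self.baseline - self.tolerance
```

An alert from `record` is the signal to investigate, refresh the knowledge base, or schedule a retraining cycle.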

LLM Use Cases That Deliver Measurable ROI in 2026

Customer support automation: LLMs trained on your product documentation, support history, and policy documents handle tier-1 and tier-2 support inquiries automatically, and well-scoped deployments can resolve 60–75% of inbound volume without human intervention. The remaining complex cases are routed to your team with full context summarized. Combined with our AI chatbot service and AI calling agent, this lets businesses cut support costs while improving customer satisfaction scores.

Internal knowledge management — Enterprises with large document repositories — legal contracts, HR policies, technical manuals, compliance documentation — deploy LLMs as internal search and Q&A systems. Employees get accurate, cited answers in seconds instead of spending 20 minutes hunting through SharePoint. Our RAG-as-a-Service platform is purpose-built for exactly this deployment pattern.

LLM agent development for sales — AI agents that research prospects, draft personalized outreach, qualify inbound leads through conversation, and update your CRM automatically. These are not simple chatbots — they are autonomous systems capable of multi-step reasoning and action across multiple tools. See how this works in practice in our business process automation tools guide.
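What separates an agent from a chatbot is the loop: the model chooses a tool, the system runs it, and the result feeds the next decision until the task is done. The sketch below shows that control flow with a scripted planner standing in for the LLM. The tool names (`lookup_company`, `update_crm`), their outputs, and the company "Globex" are hypothetical placeholders, not a real CRM integration.

```python
from typing import Callable

# Hypothetical tools; real ones would call a CRM or research API.
def lookup_company(name: str) -> str:
    return f"{name}: 120 employees, logistics sector"


def update_crm(note: str) -> str:
    return f"CRM updated with: {note}"


TOOLS: dict[str, Callable[[str], str]] = {"lookup_company": lookup_company, "update_crm": update_crm}
Planner = Callable[[str, list[str]], tuple[str, str]]


def run_agent(task: str, plan_next_step: Planner, max_steps: int = 5) -> list[str]:
    """Ask the planner for (tool, argument) pairs until it returns ("finish", summary)."""
    history: list[str] = []
    for _ in range(max_steps):  # cap steps so a confused planner cannot loop forever
        tool, arg = plan_next_step(task, history)
        if tool == "finish":
            history.append(f"done: {arg}")
            break
        if tool not in TOOLS:
            history.append(f"error: unknown tool {tool}")
            continue
        history.append(TOOLS[tool](arg))
    return history


def scripted_planner(task: str, history: list[str]) -> tuple[str, str]:
    """Stand-in for an LLM: research the prospect, log it to the CRM, then stop."""
    if not history:
        return ("lookup_company", "Globex")
    if len(history) == 1:
        return ("update_crm", history[0])
    return ("finish", "lead researched and logged")
```

In a real agent, `plan_next_step` would send the task and history to the model and parse its chosen tool call; the step cap and the unknown-tool check are the kind of guardrails that keep autonomous loops safe.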

Document processing and analysis — LLMs extract, classify, and summarize information from unstructured documents at scale. Invoice processing, contract review, medical record summarization, insurance claims triage — workflows that previously required teams of analysts now run in minutes. Our insurance AI solutions and healthcare AI solutions both deploy LLM-powered document processing as a core capability.
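For document processing, a common pattern is to ask the model to return extracted fields as JSON, then validate that output before it reaches downstream systems and route anything malformed to human review. A minimal validator for a hypothetical invoice schema (the field names and types are assumptions for illustration):

```python
import json
from typing import Optional

# Hypothetical invoice schema: field name -> accepted Python type(s).
INVOICE_SCHEMA = {"invoice_number": str, "vendor": str, "total": (int, float), "currency": str}


def validate_invoice(raw: str) -> tuple[Optional[dict], list[str]]:
    """Parse model output as JSON and check it against INVOICE_SCHEMA.

    Returns (record, []) on success, or (None, errors) so the document can be
    routed to human review.
    """
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        return None, [f"not valid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return None, ["expected a JSON object"]
    errors = []
    for field, expected_type in INVOICE_SCHEMA.items():
        if field not in data:
            errors.append(f"missing field: {field}")
        # bool is a subclass of int in Python, so reject it explicitly.
        elif isinstance(data[field], bool) or not isinstance(data[field], expected_type):
            errors.append(f"wrong type for {field}")
    return (data, []) if not errors else (None, errors)
```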

Code generation and developer tooling — Technology companies are using custom LLMs fine-tuned on their proprietary codebase to accelerate development velocity. Our custom Python development team builds the training pipelines and deployment infrastructure that make this possible at production scale.

Compliance and regulatory monitoring — Financial services and healthcare firms are deploying LLMs to continuously monitor communications, documentation, and workflows for regulatory compliance — flagging violations in real time rather than discovering them in quarterly audits. According to IBM’s AI automation research, organizations deploying AI for compliance monitoring reduce regulatory risk exposure by 40–60% within the first year of production deployment.

How to Choose an LLM Development Company

The market for LLM development services has expanded rapidly. Here is how to cut through the noise and identify a provider who will deliver real results.

1. Do they start with your business problem or with their preferred technology?

A provider who immediately recommends fine-tuning, RAG, or a specific model before deeply understanding your data and requirements is optimizing for their delivery speed, not your outcomes. Rigorous discovery is the foundation of every successful LLM development project.

2. Can they show production deployments — not just demos?

Ask for case studies with specific metrics: accuracy rates, volume handled, cost reduction, time to deployment. Demos are easy. Production systems that run reliably at scale are hard. According to Gartner, a significant portion of generative AI proof-of-concept projects are abandoned before reaching production — the providers who consistently get to production are a much smaller group.

3. How do they handle hallucination and safety?

Any LLM development company that cannot clearly explain their evaluation methodology, guardrail architecture, and ongoing monitoring approach is not ready for enterprise deployment. Hallucination is not a solved problem — it is an actively managed one.

4. What does post-deployment support look like?

Models require maintenance. Data drifts. Business requirements change. A provider who disappears after deployment is not a partner — they are a vendor. Ask specifically about SLAs, retraining cadence, and how they handle performance degradation.

5. Do they understand your industry’s regulatory environment?

Healthcare, finance, legal, and insurance all have compliance requirements that directly constrain what data can be used for training, where models can be hosted, and how outputs must be handled. Industry experience is not a nice-to-have — it is a prerequisite. Our healthcare AI, insurance AI, and IT services AI practice areas reflect exactly this depth of regulatory knowledge across the highest-compliance industries.

Build Custom vs. Use the API: The Honest Comparison

| Factor | Custom LLM development | Third-party API (GPT-4, Claude) |
|---|---|---|
| Data privacy | Full control: self-hosted | Data sent to third-party servers |
| Domain accuracy | High: trained on your data | General: not domain-specific |
| Cost at scale | Often lower long-term at high volume | Higher at high volume: per-token pricing |
| Time to first output | Weeks to months | Days |
| Competitive advantage | High: proprietary asset | Low: the same tool your competitors use |
| Vendor dependency | Low: you control the model and hosting | High: exposed to pricing and availability changes |

The API route is the right starting point for most businesses — it validates the use case quickly and cheaply before committing to custom development. The custom development route is the right long-term investment when the use case is proven, volume is high, data privacy is critical, or domain accuracy is a competitive differentiator. Our RAG-as-a-Service offering sits between these two approaches — delivering domain-specific AI accuracy without the full cost and timeline of custom model training.
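The "cost at scale" row comes down to simple arithmetic: API spend grows with token volume, while self-hosting behaves more like a fixed monthly cost. The sketch below finds the break-even volume. All prices are hypothetical placeholders, not quotes from any provider, and a real comparison would also count engineering, evaluation, and maintenance time on the self-hosted side.

```python
def monthly_api_cost(tokens_per_month: float, price_per_million: float) -> float:
    """API spend: pay per token used."""
    return tokens_per_month / 1_000_000 * price_per_million


def break_even_tokens(self_hosted_monthly: float, price_per_million: float) -> float:
    """Monthly token volume above which a fixed self-hosted cost beats per-token pricing."""
    return self_hosted_monthly / price_per_million * 1_000_000


# Hypothetical figures for illustration only.
PRICE_PER_MILLION = 5.0         # blended $ per 1M tokens
SELF_HOSTED_MONTHLY = 8_000.0   # GPUs, hosting, and operations, $ per month

volume = break_even_tokens(SELF_HOSTED_MONTHLY, PRICE_PER_MILLION)
print(f"Break-even at about {volume / 1e9:.1f}B tokens per month")
# prints: Break-even at about 1.6B tokens per month
```

Below the break-even volume the API is cheaper; above it, self-hosting starts to pay off. That is why the API suits validating a use case, and custom development becomes attractive once volume is proven.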

What to Expect: Timelines and ROI

The businesses that see the strongest returns from LLM development share one common approach — they start with a narrow, high-value use case, validate ROI, and expand from there.

A RAG-based internal knowledge system can go from discovery to production in 4–6 weeks and immediately reduces the time employees spend searching for information, a measurable, reportable improvement. A fine-tuned customer support model typically takes 8–12 weeks and can reduce the volume of tickets reaching human agents by 50–70% within the first 90 days of production.
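To turn a ticket-reduction figure into a reportable ROI number, multiply the tickets the model resolves by what each ticket costs to handle, subtract the system’s running cost, and compare the result against the upfront project cost. Every input below is a hypothetical placeholder to be replaced with your own support data.

```python
def monthly_savings(tickets_per_month: int, deflection_rate: float, cost_per_ticket: float) -> float:
    """Support cost avoided each month by tickets the model resolves without an agent."""
    return tickets_per_month * deflection_rate * cost_per_ticket


def payback_months(project_cost: float, monthly_run_cost: float, savings: float) -> float:
    """Months until cumulative net savings cover the upfront project cost."""
    net = savings - monthly_run_cost
    if net <= 0:
        return float("inf")  # the system never pays for itself at these numbers
    return project_cost / net


# Hypothetical inputs: 10,000 tickets/month, 50% deflected, $6 per ticket.
savings = monthly_savings(10_000, 0.5, 6.0)             # 30,000 per month
months = payback_months(120_000.0, 5_000.0, savings)    # 120,000 / 25,000
print(f"Payback in {months:.1f} months")
# prints: Payback in 4.8 months
```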

Full custom LLM builds for regulated industries run 3–9 months and represent a strategic infrastructure investment rather than a quick win — the ROI case is built over 12–24 months through cost avoidance, risk reduction, and competitive differentiation. For LLM development services in healthcare, this includes HIPAA-compliant deployment architecture, clinical terminology fine-tuning, and integration with existing EHR systems. For LLM development services in financial services, it includes SOC 2 compliance, explainability requirements, and audit trail infrastructure. For more on how these sectors deploy AI at scale, see our digital process automation guide.

For businesses evaluating where to start, a focused discovery engagement with an experienced provider like Exotica AI Solutions typically identifies the highest-value use case, validates feasibility against your existing data, and produces a deployment roadmap — before any model development budget is committed.

Frequently Asked Questions

LLM development is not a technology decision. It is a strategic business decision. The organizations generating durable competitive advantage from AI in 2026 are not those who moved fastest to deploy a chatbot — they are those who identified the right use case, chose the right development approach, and built AI systems that compound in value as they process more of their business data.

The path from evaluating LLM development services to deploying a production system that delivers measurable ROI starts with one question: which problem in your business, if solved by AI, would create the most significant operational or revenue impact?

Answer that question clearly, and the rest of the development process follows. Ready to find yours?

Mohit Thakur is an experienced Digital Marketing Expert, SEO Team Leader, and Content Writer with over 6 years of expertise in search engine optimization, content strategy, and digital growth. He specializes in research-driven SEO and crafting high-quality, compelling content that helps businesses improve their online visibility, organic traffic, and lead generation.

With hands-on experience across multiple industries, Mohit focuses on creating user-focused, well-researched content aligned with the latest Google algorithms and AI search trends. His approach combines technical SEO, content writing, content optimization, and data analysis to deliver consistent and measurable results.